April has been a busy month for the DemandSphere team.

As mentioned in my previous post, I spent a week with our Tokyo team earlier this month, where we held another FOUND Meetup. I also spoke at the Tokyo Digital Marketers Meetup the next day.

Next up was brightonSEO.

Denny and I arrived in London for some client meetings and then we traveled to Brighton the day before the event began.

This event was the biggest so far, with over 5,000 attendees according to the brightonSEO team. We met so many brilliant SEOs and marketers and made a lot of new friends.

It was the first time we had a booth at the event and we will be exhibiting and sponsoring again at the San Diego event this coming November.

I spoke on Thursday about SERP analytics, and my presentation was titled “Going beyond ‘what happened’ in SERP analytics.”

Photo credit: @spanskseo

It was so nice to meet the amazing people who stopped by our booth to chat with us. Many in-house and agency SEOs were interested in what we have to offer.

I will also be speaking again at the upcoming brightonSEO San Diego this November. If you’re on the fence about attending, I would say do it. It is always an amazing time.

I’ve shared my slides on SpeakerDeck, and I’ll provide an overview in this post to go along with the slides.

This presentation was a little different in that I spent less time digging into data and more on the philosophy of how we view SERP analytics and why I believe we need to evolve this practice.

In many companies, the problem with how SERP analytics is handled is that the only question being asked is “what happened?”

This is evident in the types of metrics we see: What is my rank? What is my competitors’ rank? What is the trend over time?

Not enough time is spent on the questions that will lead to action, starting with the question “Why?”

Let me provide an example.

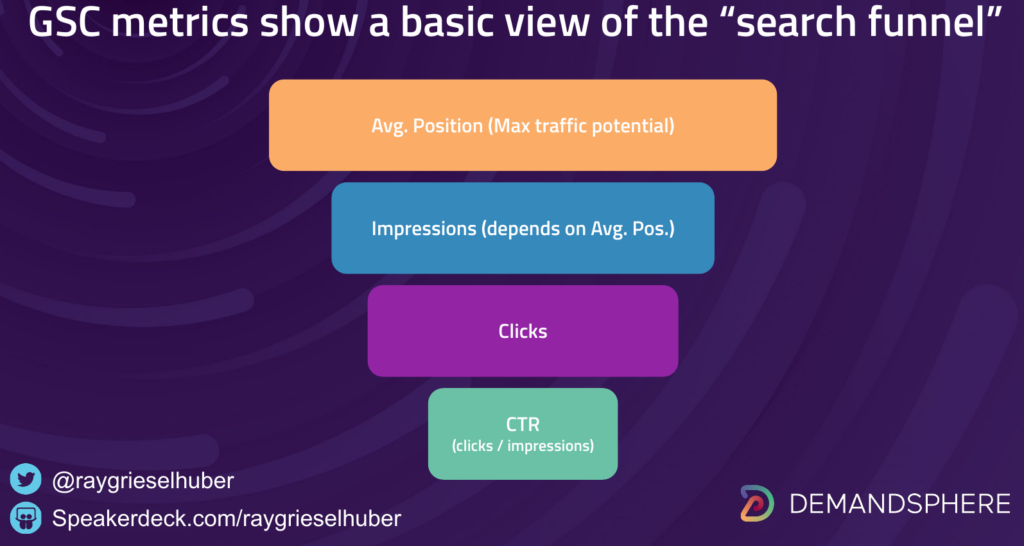

Think about Search Console and the core metrics that it provides as performance data: average position, impressions, clicks, and click-through rate (CTR).

These are all “what happened” metrics.

Weirdly enough, these metrics don’t really tell us anything beyond what we were analyzing back in the days of the “ten blue links.”

As SEOs, we all have some version of this basic search funnel in mind when we think of GSC data:

Your average position correlates with the number of impressions you get, some share of those impressions turn into clicks (your CTR), and there’s your data.
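To make the funnel concrete, here is a minimal sketch that rolls Search Console-style rows up into the four core metrics. The `GscRow` type and its fields are hypothetical stand-ins for an export, and weighting each query’s position by its impressions is an assumption made for this sketch, not a description of how GSC computes its own figure.

```python
from dataclasses import dataclass


@dataclass
class GscRow:
    # Hypothetical shape of one exported Search Console row
    query: str
    impressions: int
    clicks: int
    position: float  # average position reported for this query


def summarize(rows: list[GscRow]) -> dict[str, float]:
    """Roll query-level rows up into the four 'what happened' metrics."""
    impressions = sum(r.impressions for r in rows)
    clicks = sum(r.clicks for r in rows)
    # Assumption: weight each query's position by its impressions
    avg_position = (
        sum(r.position * r.impressions for r in rows) / impressions
        if impressions else 0.0
    )
    ctr = clicks / impressions if impressions else 0.0
    return {
        "impressions": impressions,
        "clicks": clicks,
        "avg_position": round(avg_position, 2),
        "ctr": round(ctr, 4),
    }


rows = [
    GscRow("serp analytics", impressions=1000, clicks=50, position=2.0),
    GscRow("rank tracking", impressions=3000, clicks=30, position=4.0),
]
print(summarize(rows))  # {'impressions': 4000, 'clicks': 80, 'avg_position': 3.5, 'ctr': 0.02}
```

Notice what the output cannot tell you: the first query converts 5% of impressions into clicks and the second only 1%, and nothing in these four numbers explains why.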

So what’s the problem?

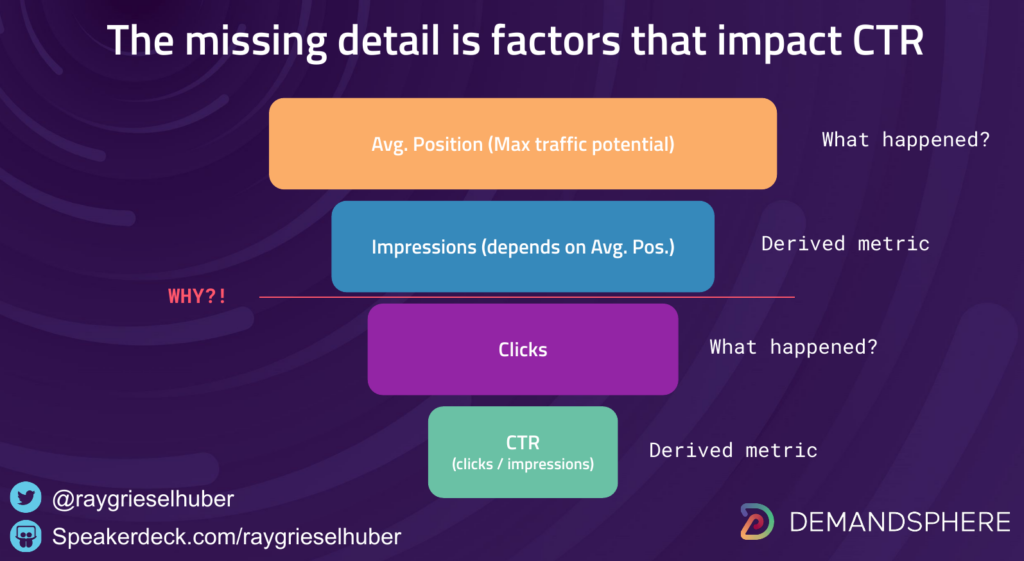

The problem is that these metrics tell you nothing about WHY your CTR is what it is.

Ok, so that’s actually a huge problem because management wants to understand why clicks are down even when rankings are up.

More importantly, teams need to understand for themselves WHY CTR is impacted by more than average position and impressions and WHERE the best opportunities are.

For this, we need to go beyond traditional rank tracking.

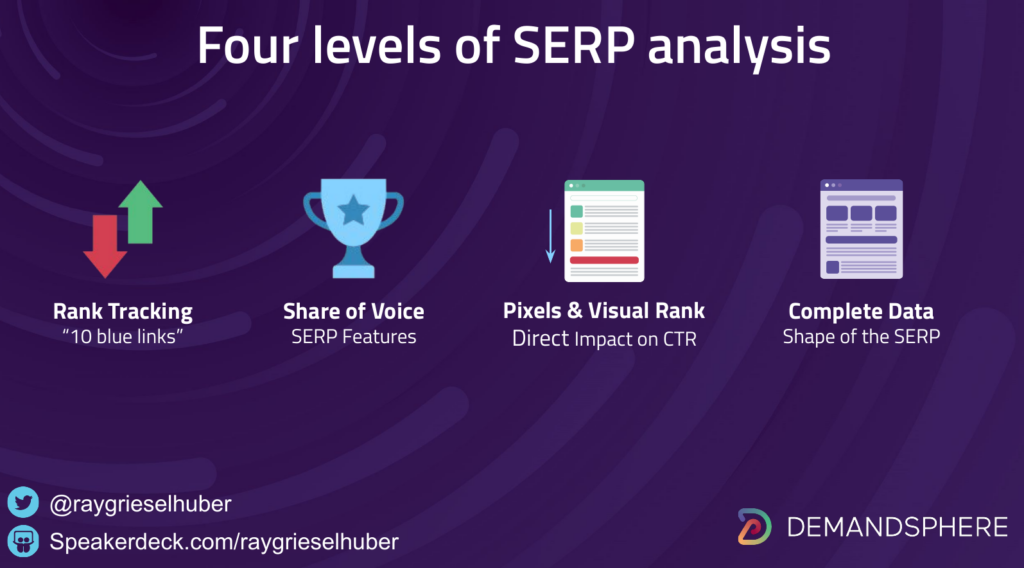

The next evolution of rank tracking does a better job at this.

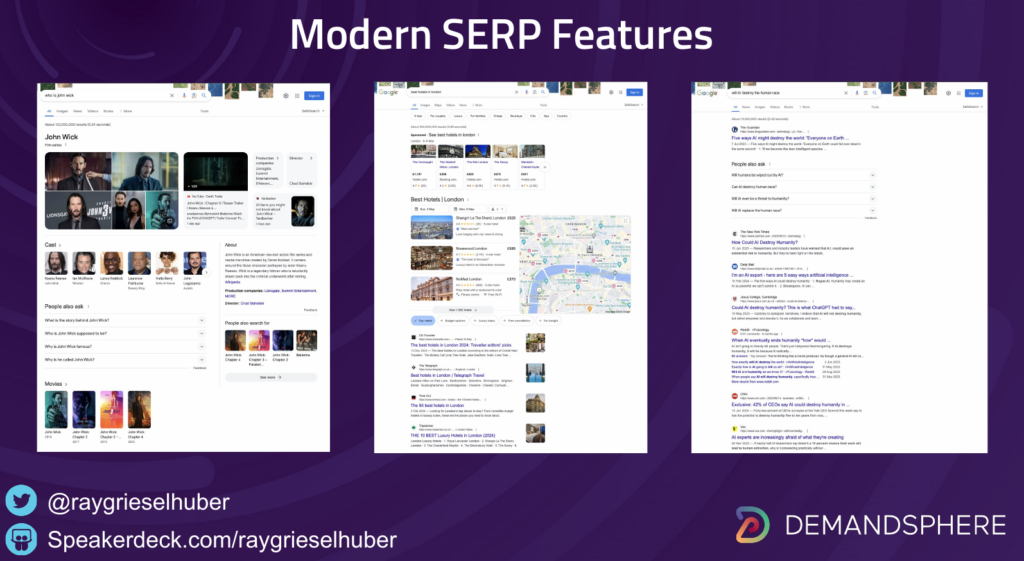

We all know that the modern SERP is very rich and constantly evolving. Google is having an identity crisis: it can’t decide whether it wants to be a search engine or purely a destination site to sell ads and conversions.

These SERP Features change what the searcher sees, to the point that organic rankings might not change much while the CTR impact is very real, simply because of the visual shape of the SERP.

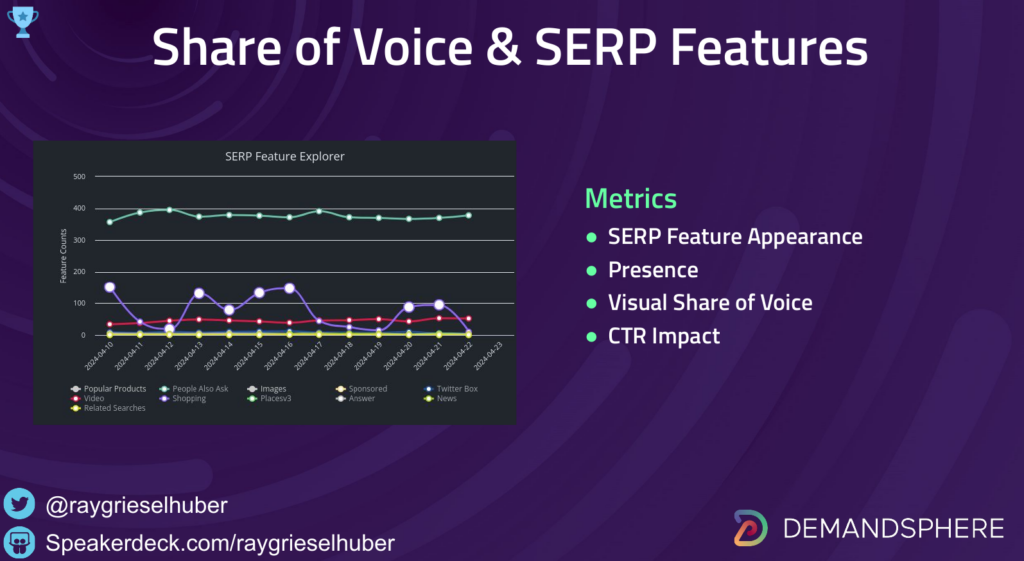

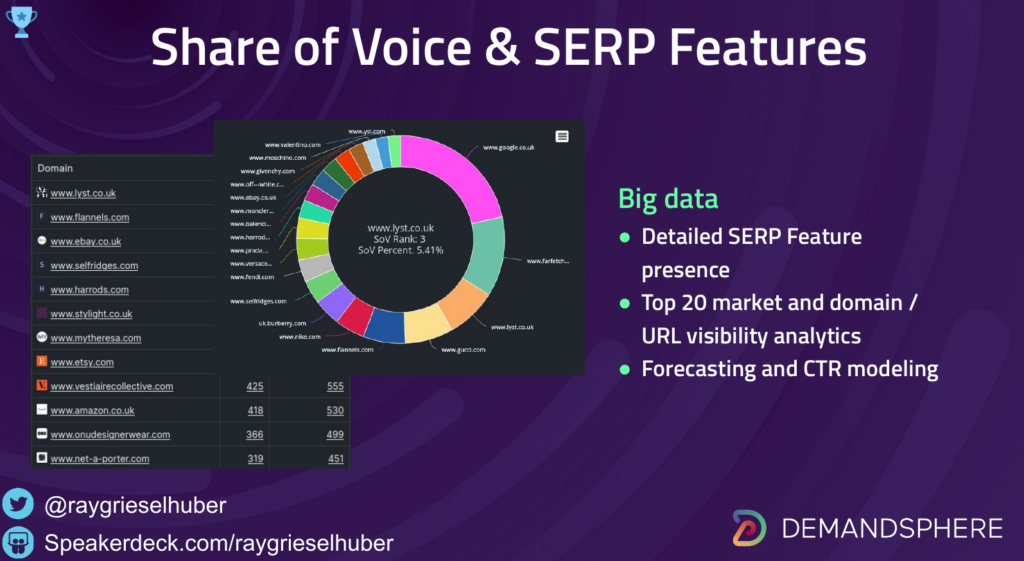

In response to the proliferation of SERP Features in search results, platform vendors such as ourselves and others made the first shift from mere rank tracking to SERP monitoring. In DemandMetrics, we built out features that provide the capability to filter for the presence of SERP Features and highlight when a client domain is appearing in them.
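As a rough illustration of this kind of filtering (not the actual DemandMetrics implementation), the sketch below assumes each monitored SERP is stored as a list of elements, each tagged with a feature type and an optional domain. The `SerpElement` type, the feature names, and the domains are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SerpElement:
    feature: str           # e.g. "organic", "featured_snippet", "people_also_ask"
    domain: Optional[str]  # domain shown in the element, if any


def keywords_with_feature(serps: dict[str, list[SerpElement]], feature: str) -> list[str]:
    """Keywords whose SERP contains the given feature at all."""
    return [kw for kw, elements in serps.items()
            if any(e.feature == feature for e in elements)]


def keywords_where_domain_in_feature(
    serps: dict[str, list[SerpElement]], feature: str, domain: str
) -> list[str]:
    """Keywords where the client domain appears inside the given feature."""
    return [kw for kw, elements in serps.items()
            if any(e.feature == feature and e.domain == domain for e in elements)]


serps = {
    "serp api": [SerpElement("featured_snippet", "example.com"),
                 SerpElement("organic", "example.com")],
    "rank tracker": [SerpElement("people_also_ask", None),
                     SerpElement("organic", "competitor.io")],
    "serp features": [SerpElement("featured_snippet", "competitor.io"),
                      SerpElement("organic", "example.com")],
}
print(keywords_with_feature(serps, "featured_snippet"))  # ['serp api', 'serp features']
print(keywords_where_domain_in_feature(serps, "featured_snippet", "example.com"))  # ['serp api']
```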

Additional metrics such as visual share of voice (VSoV), which provides insight into the potential CTR impact of these SERP Features, help analysts get clarity on who is really winning the SERP, something simple ranking metrics cannot explain.
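The exact VSoV calculation isn’t spelled out here, so the following is only a hypothetical sketch of the idea: credit each domain with the share of an assumed 1,000 px viewport that its elements occupy, rather than with a rank position. The viewport height, the tuple layout, and the sample SERP are assumptions, not DemandSphere’s formula.

```python
from collections import defaultdict


def visual_share_of_voice(elements, viewport_px=1000):
    """Share of the visible viewport height occupied by each domain.

    elements: list of (domain, top_px, height_px) tuples; domain may be None
    for elements with no attributable site. Hypothetical layout.
    """
    visible = defaultdict(float)
    for domain, top, height in elements:
        if domain is None:
            continue
        # Clip each element to the portion that falls inside the viewport
        bottom = min(top + height, viewport_px)
        if bottom > top:
            visible[domain] += bottom - top
    return {d: px / viewport_px for d, px in visible.items()}


serp = [
    ("adsite.com", 0, 300),       # ad block
    ("competitor.io", 300, 400),  # featured snippet
    ("example.com", 700, 150),    # organic result #1
    ("example.com", 850, 300),    # organic result #2, partly below the fold
]
print(visual_share_of_voice(serp))  # {'adsite.com': 0.3, 'competitor.io': 0.4, 'example.com': 0.3}
```

In this made-up SERP, example.com holds both top organic positions yet ends up with less visible space than the featured snippet, which is exactly the kind of gap plain rank metrics miss.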

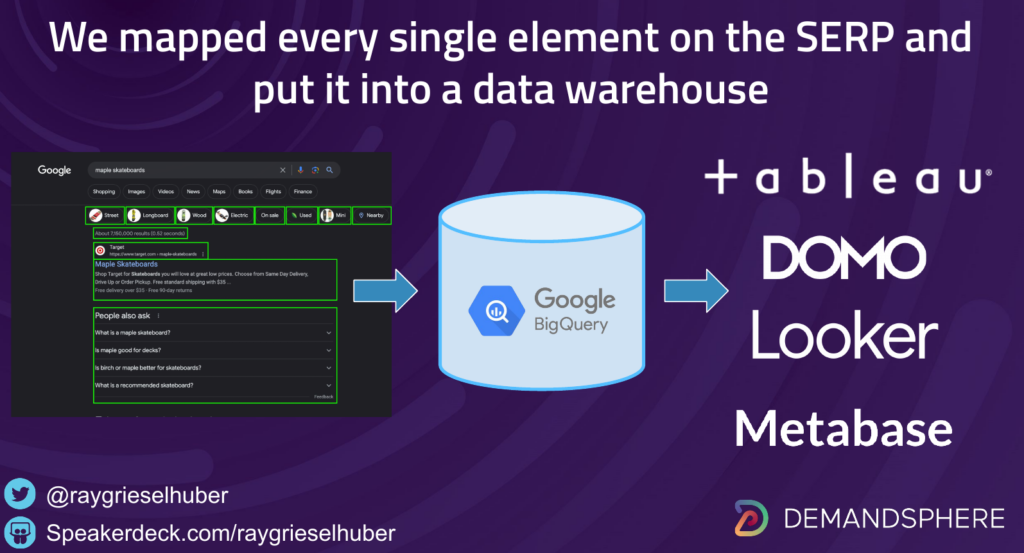

This level of SERP monitoring is always where you really start to see big data analytics emerge. Once you start working with all of the SERP Features in addition to the ranking organic URLs, you’re dealing with large datasets, and this is typically when teams start adopting some form of data warehouse technology such as BigQuery. We work with a lot of database platforms, but BigQuery has quickly become the standard way to share large datasets between teams and companies.
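Datasets grow this quickly because each SERP becomes many rows once every feature is modeled, not just the ten organic URLs. Below is a minimal sketch of flattening one nested SERP payload into warehouse-friendly rows, one row per element per crawl date. The payload shape and column names are hypothetical; a real schema, in BigQuery or elsewhere, will differ.

```python
import json
from datetime import date


def flatten_serp(keyword, crawl_date, payload):
    """Turn a nested SERP payload into one flat row per element (hypothetical schema)."""
    rows = []
    for position, element in enumerate(payload["elements"], start=1):
        rows.append({
            "crawl_date": crawl_date.isoformat(),
            "keyword": keyword,
            "element_position": position,  # order on the page across all element types
            "element_type": element["type"],
            "domain": element.get("domain"),
            "url": element.get("url"),
        })
    return rows


payload = json.loads("""
{"elements": [
  {"type": "ad", "domain": "adsite.com", "url": "https://adsite.com/"},
  {"type": "people_also_ask"},
  {"type": "organic", "domain": "example.com", "url": "https://example.com/serp"}
]}
""")
rows = flatten_serp("serp analytics", date(2024, 4, 1), payload)
for row in rows:
    print(row)
```

Rows in this shape can be written out as newline-delimited JSON, one of the formats BigQuery can load directly.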

The more you can include this level of granularity in your traffic planning models, the better.

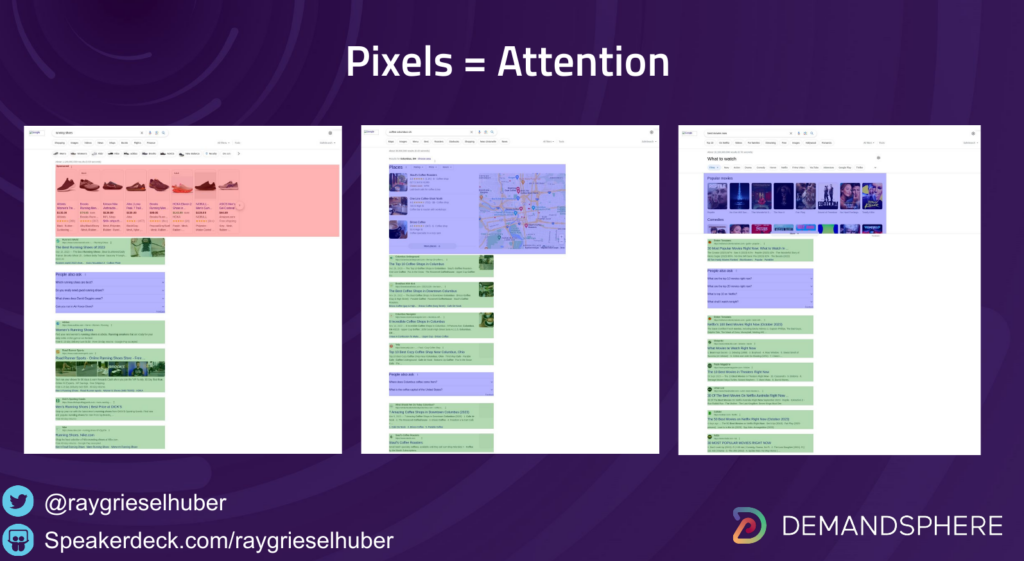

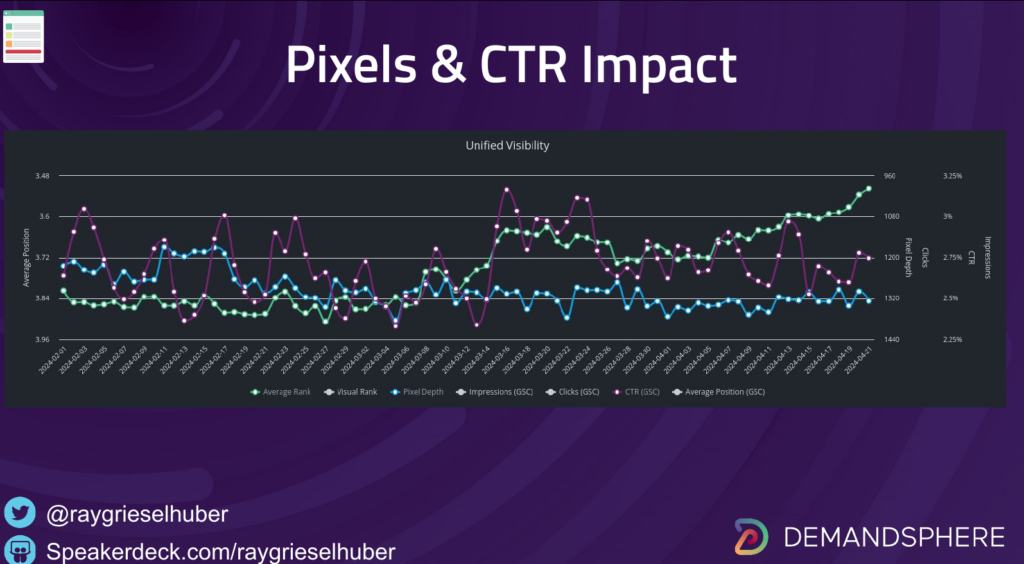

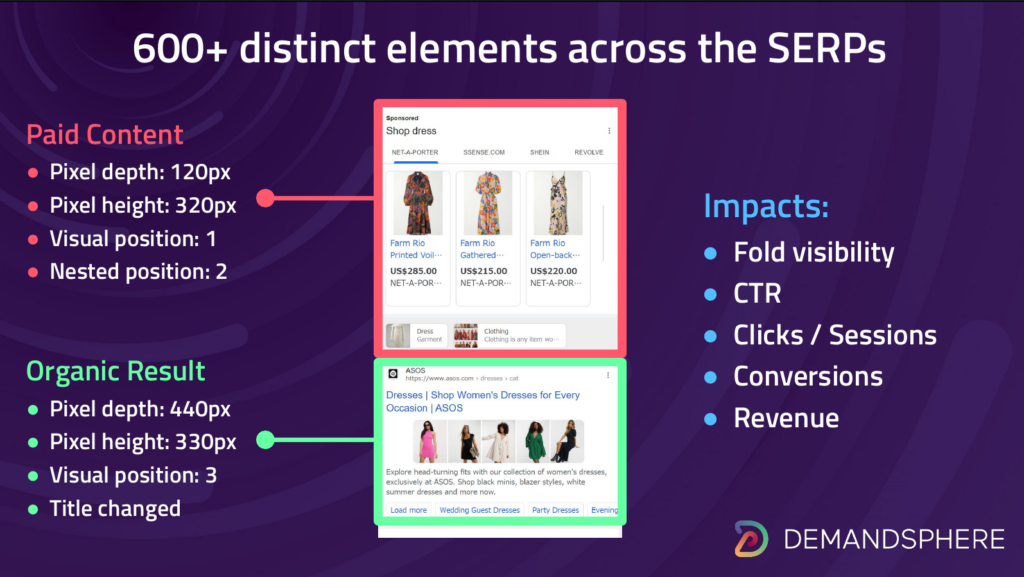

Now we move into the third level of SERP monitoring and this is where things really get interesting in tracking the evolution of the SERPs: pixel monitoring.

When we talk about pixel monitoring on SERPs, we’re talking about measuring every pixel on the SERP and using this to provide analytics around what the searcher is likely to focus on. Since most SERPs have a strong vertical orientation, pixel metrics are generally oriented around the depth of a ranking position from the top.

We show this by looking at pixel depth and also visual rank (VR). Visual rank is the ranking on the page from the perspective of the searcher. Most searchers are not SEOs, so they aren’t always thinking in terms of “this is an ad, this is a SERP feature, etc.” (which is why Google has an advertising business). They simply look at the search results and tend to pick what is on top. Visual rank is what captures this reality; it is a user-centric measurement.
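Here is a minimal sketch of the two measurements, assuming you already have each element’s top pixel offset from a rendered SERP. The `Element` type and the sample SERP are hypothetical. Visual rank is simply the element’s order by pixel depth across every element type, while organic rank counts organic results only, which shows how the two diverge.

```python
from dataclasses import dataclass


@dataclass
class Element:
    kind: str    # "ad", "sge", "featured_snippet", "organic", ...
    url: str
    top_px: int  # pixel depth: distance from the top of the page


def rank_table(elements):
    """Return (url, kind, organic_rank, visual_rank, pixel_depth) per element."""
    ordered = sorted(elements, key=lambda e: e.top_px)
    table = []
    organic_rank = 0
    for visual_rank, e in enumerate(ordered, start=1):
        if e.kind == "organic":
            organic_rank += 1
        table.append((e.url, e.kind,
                      organic_rank if e.kind == "organic" else None,
                      visual_rank, e.top_px))
    return table


serp = [
    Element("organic", "example.com/a", 1180),
    Element("ad", "adsite.com", 160),
    Element("featured_snippet", "competitor.io", 520),
    Element("ad", "adsite2.com", 340),
    Element("organic", "example.com/b", 1350),
]
for row in rank_table(serp):
    print(row)
```

In this example, example.com/a is organic #1 but visual rank 4, sitting 1,180 px down the page.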

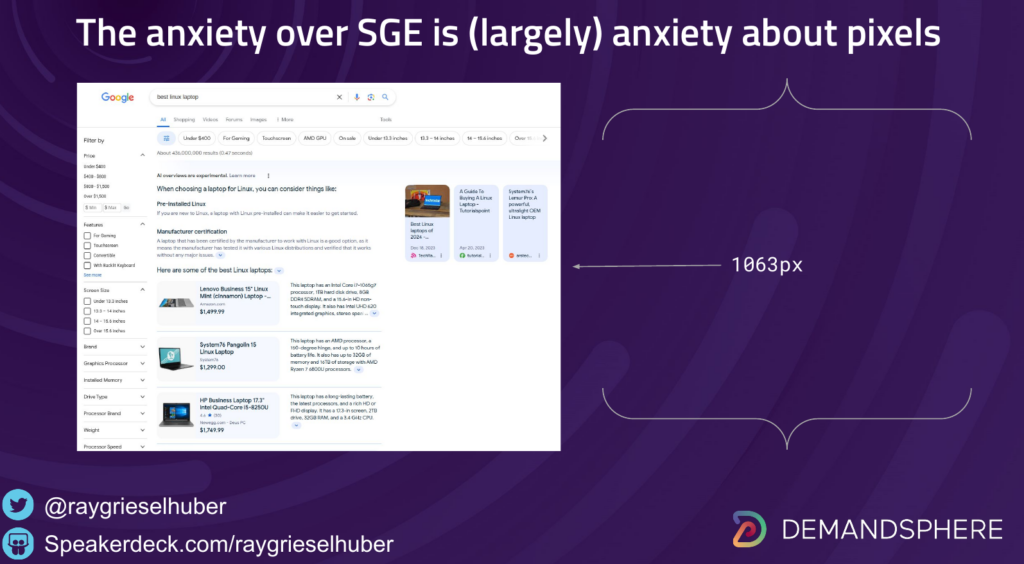

During the presentation, I used this part of the discussion to take a brief segue into Google SGE. I didn’t want the presentation to turn into a discussion of SGE, as we already have plenty of those, but the anxiety around SGE highlights the fact that much of it is really anxiety about pixels. SEOs know that SGE has the potential to further squash organic results, visually speaking.

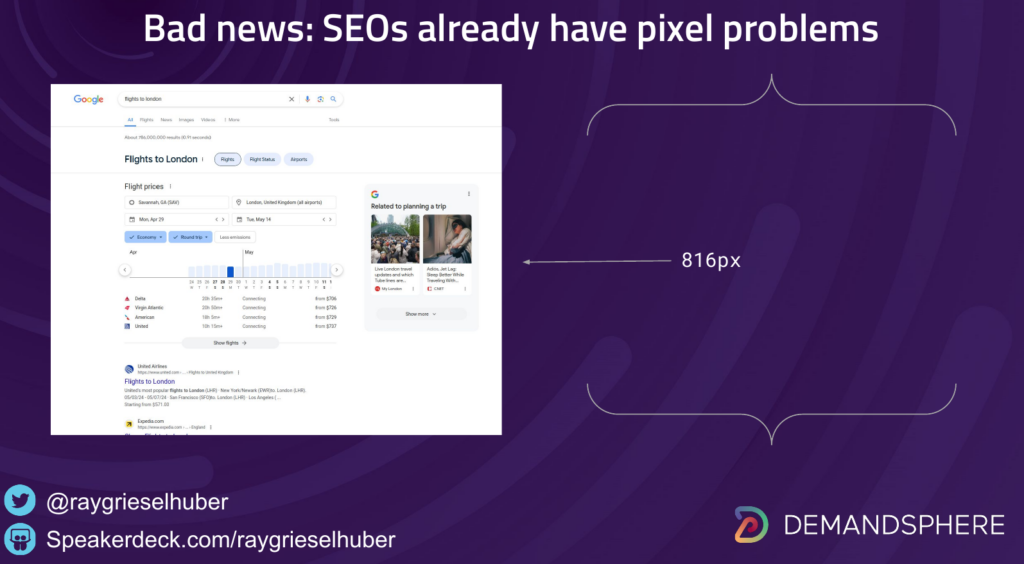

I used this opportunity to note that SEOs already have pixel problems and have had them for a long time.

That is not to say that we shouldn’t think about SGE or what the impact is going to be. But all of this needs to be considered holistically and strategists at the highest levels of organizations need to be aware that while search itself will never go away because it is a behavior primitive, it will continue to evolve quickly.

The way to adapt in fitness landscapes that are always evolving is through reliance on data, not just in analysis but in day-to-day operations. I have worked with hundreds of the most elite companies in the world in my career, and the biggest differentiator between the leaders and the rest is this operational capability.

As we built out this type of analysis, the Holy Grail for our customers sounded simple but took a remarkably long path to reach: being able to demonstrate, with data, exactly why CTRs diverge from top placement in organic rankings.

This is how the concept of Unified Visibility was born.

It aims to close the missing gap between impressions and clicks in Google Search Console.
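We won’t reproduce Unified Visibility here, but the underlying idea of putting SERP context next to Search Console numbers can be sketched as a simple join on query. Everything below is hypothetical: the dictionaries, the illustrative figures, and the 800 px threshold used to flag results pushed down the page.

```python
# query -> (avg organic position, clicks, impressions); illustrative numbers only
gsc = {
    "serp analytics": (1.2, 120, 1000),
    "rank tracker": (1.1, 15, 1000),
}
# query -> pixel depth of our top organic result (hypothetical SERP data)
serp_pixels = {
    "serp analytics": 310,
    "rank tracker": 1240,
}


def explain_ctr(gsc, serp_pixels, depth_threshold=800):
    """Join GSC metrics with SERP pixel depth and flag pushed-down results."""
    report = []
    for query, (position, clicks, impressions) in gsc.items():
        depth = serp_pixels.get(query)
        ctr = clicks / impressions if impressions else 0.0
        pushed_down = depth is not None and depth > depth_threshold
        report.append({"query": query, "position": position, "ctr": ctr,
                       "pixel_depth": depth, "pushed_down": pushed_down})
    return report


for entry in explain_ctr(gsc, serp_pixels):
    print(entry)
```

Both queries rank at roughly position 1, but only the SERP data hints at why one earns an eighth of the other’s CTR.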

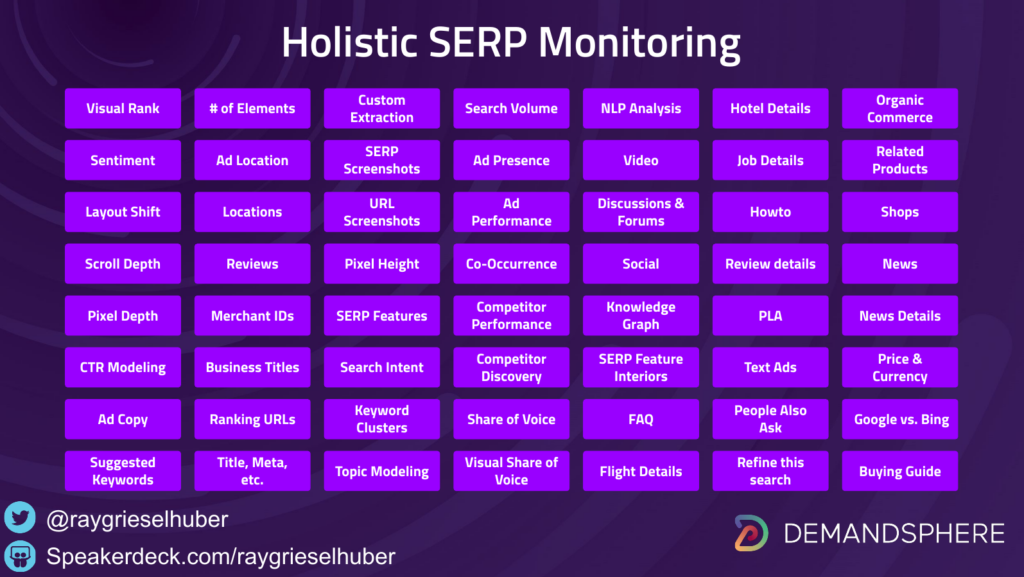

The fourth and (currently) final level of SERP analytics is what is known as holistic SERP analytics.

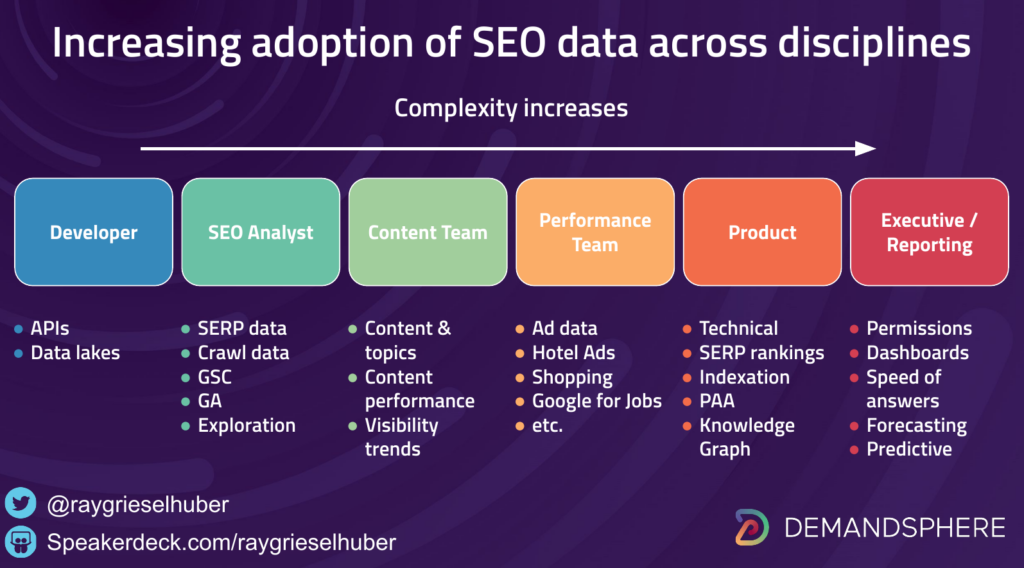

Holistic SERP monitoring goes even beyond visual analytics into turning the entire shape of the SERP into a large-scale, queryable dataset that can be used by many teams.

This is where we are seeing a lot of growth in adoption.

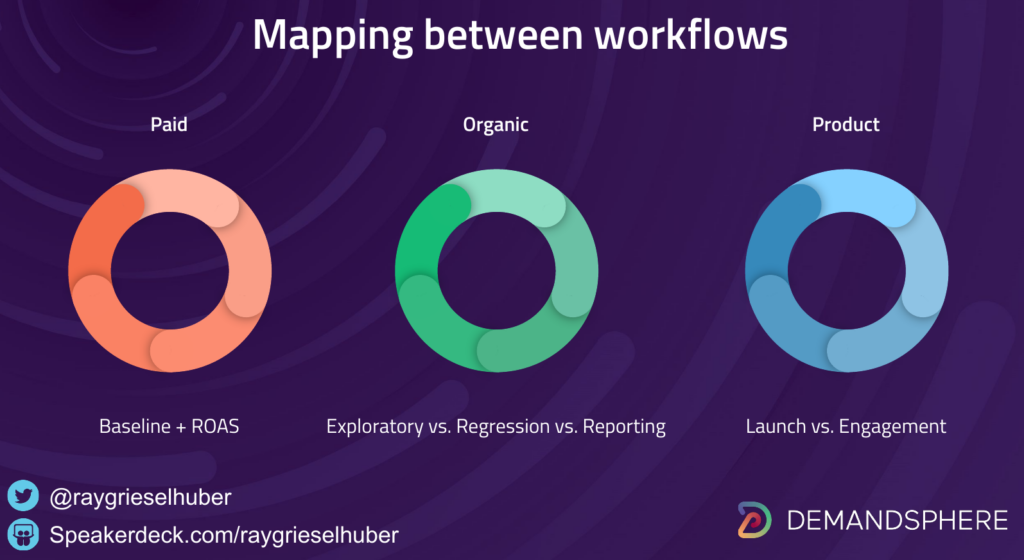

SEO teams are being enabled to explain the impact of the whole SERP, not just to their own performance marketing teams, but also to product, development, and executive teams.

This level of data comes with challenges, however, because the dataset is exponentially larger.

By our own measurements, there are 600+ (and quickly growing) distinct elements within the SERPs that can be modeled.

All of these elements, to varying degrees, have an impact on searcher behavior.

The way we opted to solve this problem, as mentioned above, is by turning to BigQuery for managing this data.

This provides some advantages and also some challenges.

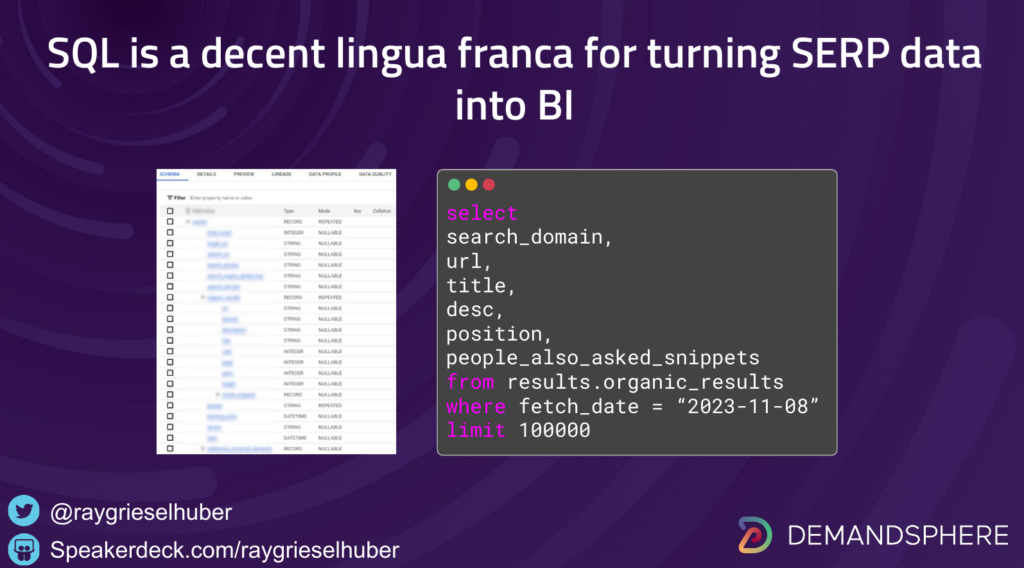

As most SEOs will know, SQL is the lingua franca for all analytics and pretty much any quality BI tool will allow you to join disparate datasets using a combination of SQL analysis and visualization tools.

So it made sense to standardize on this.

The challenge that this presents is that not everybody is a data engineer or even a SQL-fluent analyst.

An additional challenge is that once the entirety of SERP data is available in this format, you quickly run into an explosion of use cases. As mentioned above, those use cases explode when you cross team boundaries into other groups such as product management, development, and so on.

There are a number of ways to solve this problem. One simple solution we arrived at was to build a library of queries in our documentation and in BigQuery / Google Colab notebooks that provide instant access to the data, so you can worry less about understanding the whole schema and focus on what you actually need.
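As a rough sketch of the query-library idea, the example below stores named, parameterized SQL queries and runs them by name, so an analyst only needs a query name and its parameters rather than the whole schema. Python’s built-in `sqlite3` stands in for BigQuery so the example runs locally; the `serp_elements` table, its columns, and the query names are hypothetical.

```python
import sqlite3

# Named, reusable queries; callers pass parameters instead of writing SQL
QUERY_LIBRARY = {
    "feature_presence": """
        SELECT element_type, COUNT(DISTINCT keyword) AS keywords
        FROM serp_elements
        WHERE crawl_date = :crawl_date
        GROUP BY element_type
        ORDER BY keywords DESC, element_type
    """,
    "domain_visibility": """
        SELECT keyword, MIN(element_position) AS best_position
        FROM serp_elements
        WHERE crawl_date = :crawl_date AND domain = :domain
        GROUP BY keyword
        ORDER BY keyword
    """,
}


def run_named_query(conn, name, **params):
    """Look up a query by name and execute it with named parameters."""
    return conn.execute(QUERY_LIBRARY[name], params).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("""CREATE TABLE serp_elements (
    crawl_date TEXT, keyword TEXT, element_position INTEGER,
    element_type TEXT, domain TEXT)""")
conn.executemany(
    "INSERT INTO serp_elements VALUES (?, ?, ?, ?, ?)",
    [
        ("2024-04-01", "serp analytics", 1, "ad", "adsite.com"),
        ("2024-04-01", "serp analytics", 2, "organic", "example.com"),
        ("2024-04-01", "rank tracker", 1, "people_also_ask", None),
        ("2024-04-01", "rank tracker", 2, "organic", "example.com"),
        ("2024-04-01", "rank tracker", 3, "organic", "competitor.io"),
    ],
)
print(run_named_query(conn, "feature_presence", crawl_date="2024-04-01"))
# [('organic', 2), ('ad', 1), ('people_also_ask', 1)]
print(run_named_query(conn, "domain_visibility", crawl_date="2024-04-01", domain="example.com"))
# [('rank tracker', 2), ('serp analytics', 2)]
```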

So, during the presentation, that was my explanation of the Four Levels of SERP analysis.

You need all four.

We all still rely on simple organic rankings all the time because this represents the current state of Google’s index and ranking system.

This changes daily, of course, so the more often you monitor, ideally daily, the better.

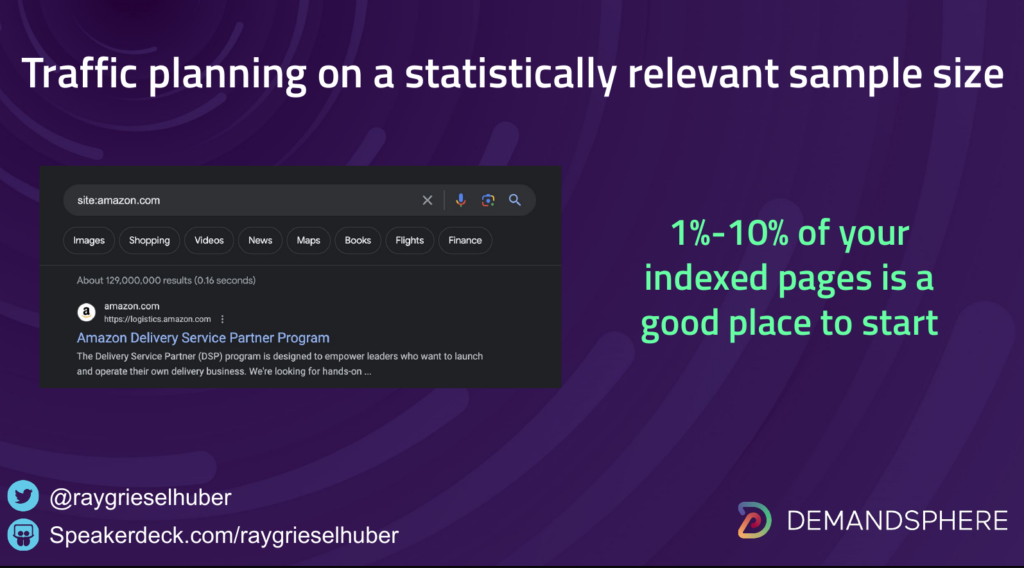

This statement raises additional questions, and one of the most common is: how many keywords should be monitored? The most relevant factor here, of course, is cost.

Determining the right sample size is a question of statistical validity and should be settled at the strategic level by looking at the margin of error, the confidence level, and the variability in the results.

This can be achieved by combining large-scale, one-time pulls of SERP data and then testing your sample size for these factors.
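For the sample-size side, one standard textbook approach, Cochran’s formula for estimating a proportion with a finite population correction, ties those three factors together. This is offered as a general statistical sketch, not a method prescribed in the presentation; the 95% confidence z-score of 1.96, the ±2% margin, and the worst-case variability of p = 0.5 are example inputs.

```python
import math


def sample_size(population, margin_of_error=0.02, z=1.96, p=0.5):
    """Cochran's sample size for a proportion, with finite population correction.

    population: number of candidate keywords you could monitor
    margin_of_error: acceptable error, e.g. 0.02 for +/-2%
    z: z-score for the confidence level (1.96 is roughly 95%)
    p: expected proportion; 0.5 is the most conservative (highest variability)
    """
    n0 = (z ** 2) * p * (1 - p) / margin_of_error ** 2
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


print(sample_size(500_000))  # 2390
```

With these example inputs, 500,000 candidate keywords yield a sample of 2,390; tightening the margin to ±1% roughly quadruples it.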

But if you’re just looking for a good rule of thumb, particularly for larger sites, monitoring a number of keywords equal to somewhere between 1% and 10% of your indexed pages is a good place to start.

So, for example, if your site has 500,000 indexed pages, then anywhere from 5K to 50K keywords monitored daily is not unreasonable. This is, of course, subject to the above caveats about determining a proper sample size.
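The rule of thumb is simple enough to express directly. This hypothetical helper just applies the 1% to 10% band to an indexed-page count; the name and defaults are ours, and the band should be revisited once you have tested your sample size against the statistical caveats above.

```python
def keyword_budget(indexed_pages, low=0.01, high=0.10):
    """Suggested daily keyword range: 1%-10% of indexed pages (rule of thumb)."""
    return round(indexed_pages * low), round(indexed_pages * high)


print(keyword_budget(500_000))  # (5000, 50000)
```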

This topic alone is probably worth its own presentation.

So, why does all of this matter?

It’s all about the users.

It always has been and always will be about human attention.

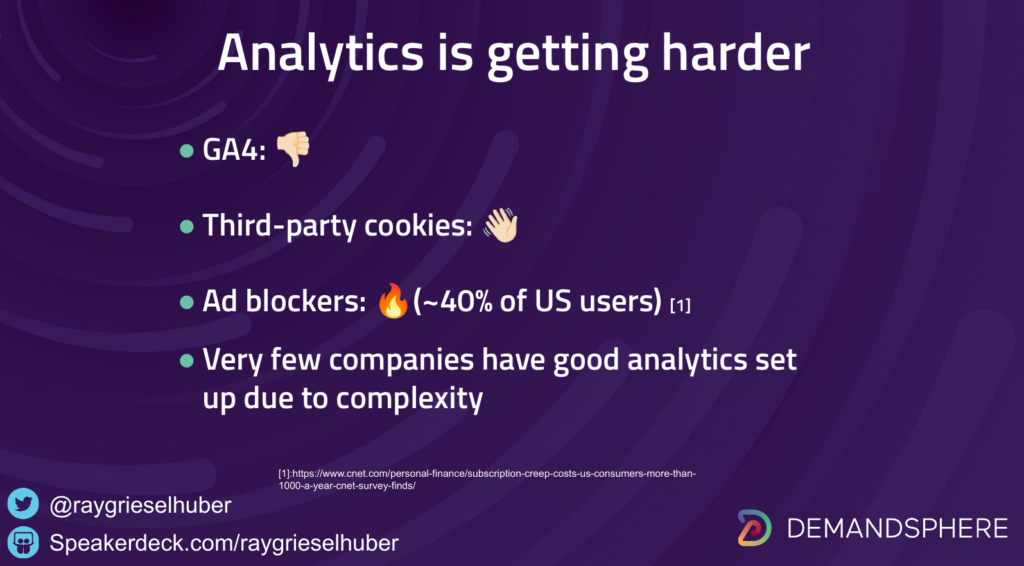

There are many challenges today in measuring human attention because analytics itself is becoming much harder than it used to be. I can’t tell you the number of companies who are struggling with getting a good analytics setup internally.

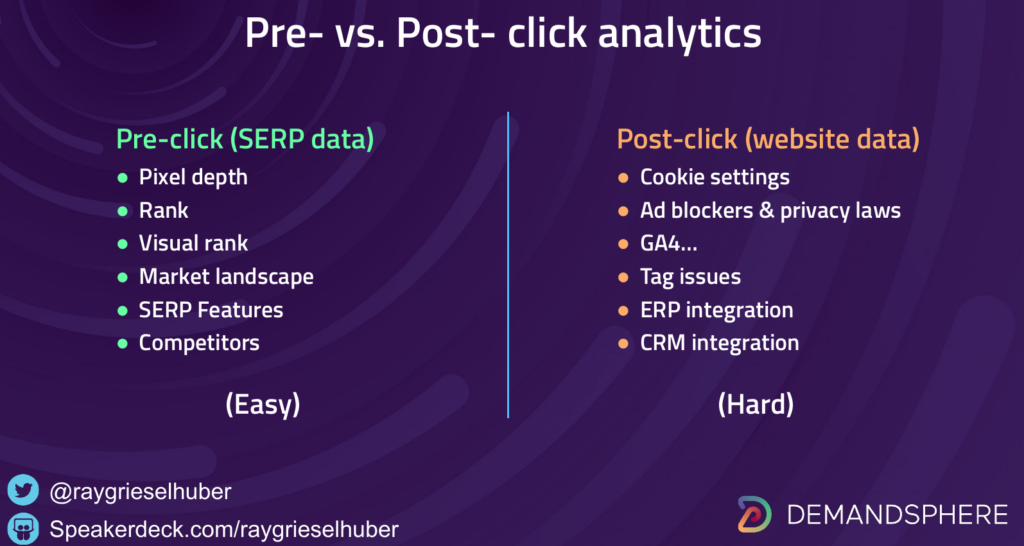

Interestingly enough, all of these challenges are analytical challenges that are happening post-click, after the searcher clicks on a link to your website and does something during their visit.

This brings us to the enormous opportunity that SERP analytics presents: pre-click analytics.

With the rise of zero click searches as well as the factors mentioned above, there is an almost infinite resource of data available to mine that accurately predicts user behavior long before they would ever click into your site (if they ever do).

This is the real value of SERP analysis and this is the reason it goes way beyond the boundaries of what is traditionally considered SEO. This is the reason that smart product managers and founders are all over this data.

This is why I say that once we move from what into why, the next question is who, as in who should be using this data.

In our experience, product and ad teams are the most natural adjacent teams for SERP analytics for both obvious and non-obvious reasons.

One of the most important, and perhaps non-obvious, reasons is a mantra you will hear me repeat often:

Most SEO problems are corporate strategy problems.

We see this all the time in customer interactions. Here is a concrete example.

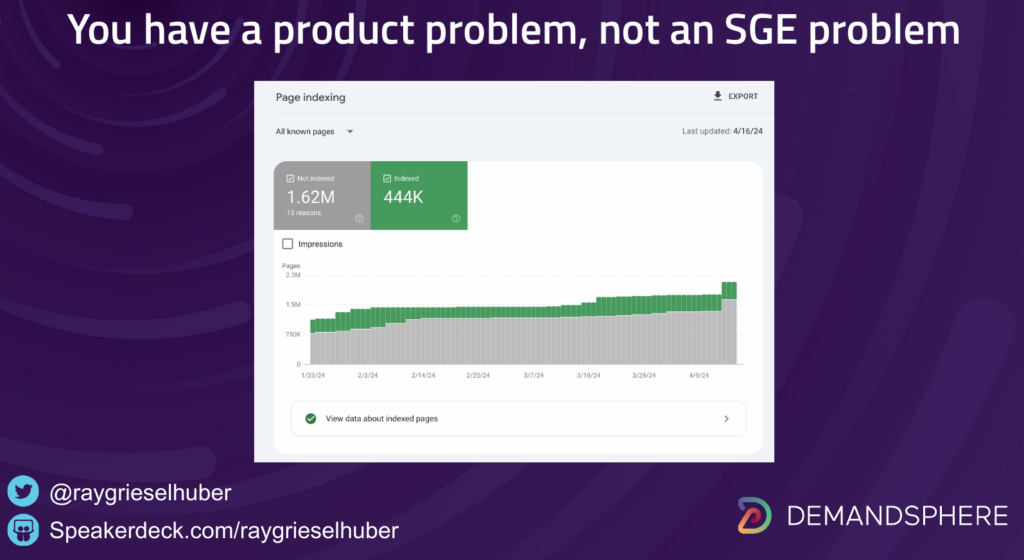

This probably looks familiar, right?

It seems like everybody is struggling with indexation lately.

I explained why in my blog post on the Impact of Compute.

The short version is that Google is freaking out internally about the amount of computational power they will need in coming years.

This is why they are doing everything they can to crawl and index sites less than they were before.

I talked about this at our FOUND meetup in Tokyo earlier this month.

Going back to the product impact: one of the biggest reasons that indexing is taking a nosedive is that many websites are too slow. This is the real impact of Core Web Vitals (CWV).

A few years ago, everybody in SEO was worried that CWV was going to somehow be included in “the algorithms” that determine search rankings.

But the impact of CWV is far more fundamental.

Website performance issues may or may not have some impact on rankings, but the real question is whether they are causing your web pages not to be indexed in the first place.

The issues that are affecting performance, internal navigation, user engagement metrics, conversions, etc. are ALL product issues.

So now we see the deep connection between SEO and product management.

This is the reason that you will also hear me often repeat another mantra of ours:

Good SEO is good product management, and vice-versa.

SEOs, as a whole, have an amazing opportunity in their careers to spread this message.

It will turn them into far more strategic assets for their companies.

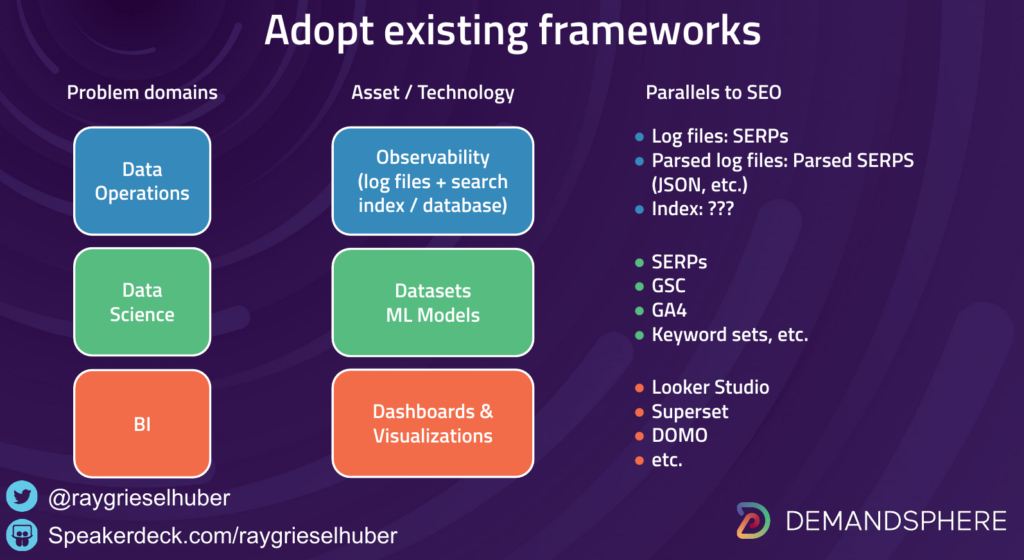

This is one of the reasons that I recommend that SEOs learn to speak the languages of data science, analytics, and business intelligence, because these disciplines are receiving a ton of investment and attention from executive teams.

Executive teams may not understand SEO (although it would behoove them to educate themselves), but they do understand the value of data, intelligence, and product strategy.

This can be achieved quite effectively through the use of the simple tools that I have mentioned above, including data warehouses, SQL, and business intelligence platforms.

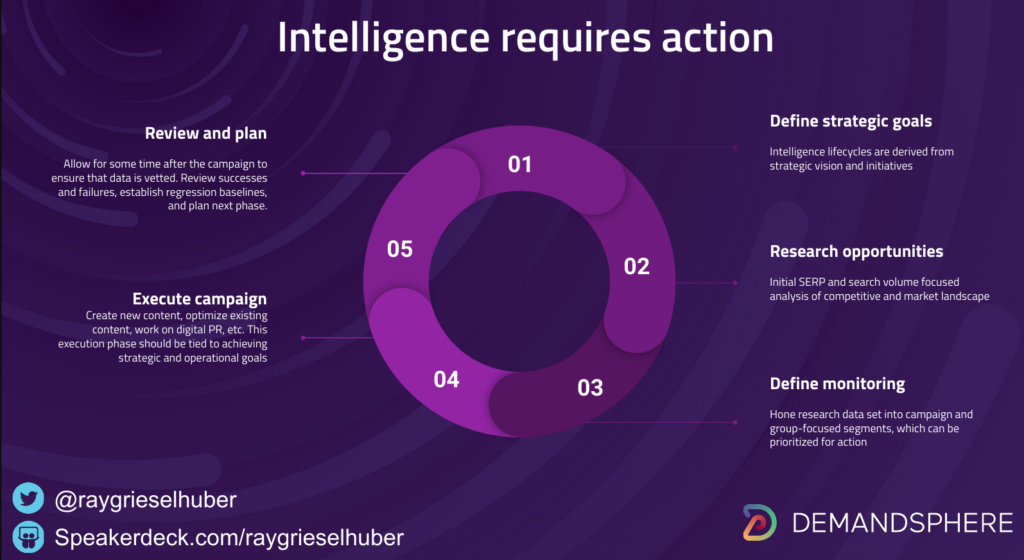

The key to making business intelligence work is to understand the intelligence lifecycle.

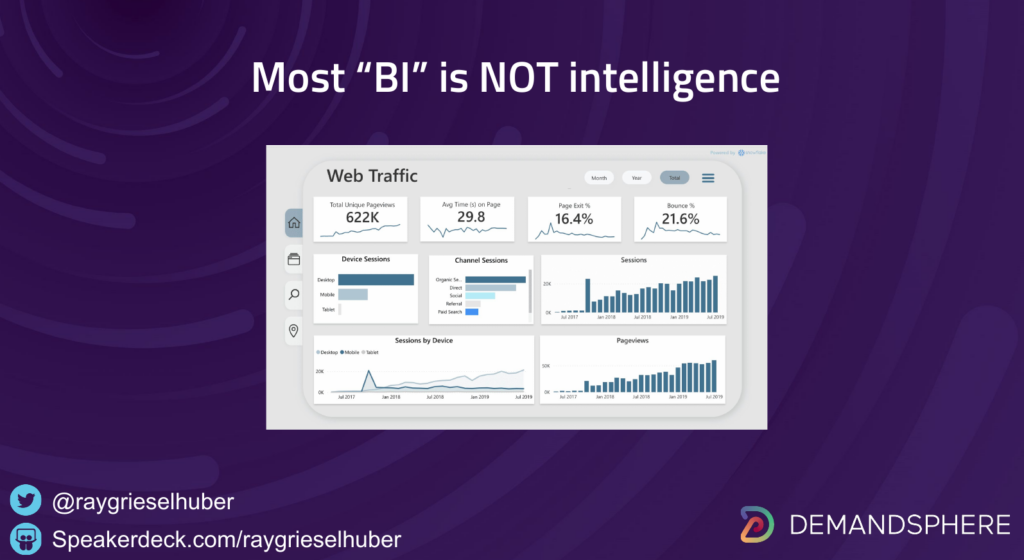

Unfortunately, most of what passes for “business intelligence” is not even remotely intelligence. It is simply a bunch of dashboards.

The intelligence lifecycle has been defined in the military and security worlds quite effectively through frameworks such as F3EAD (another post I will write soon).

The main point of the intelligence lifecycle (and it doesn’t really matter which framework you choose, as long as it includes operational capabilities) is that it always results in a well-defined action that meets the strategic objectives defined at the outset.

The best companies I have worked with understand this at the most fundamental level of their corporate strategy.

There is no time wasted on “convincing management of the value of SEO.”

The executive team at these high performing companies has organic search built into their DNA.

They always go on to be category leaders.

This is the reason that organic search performance can and should be considered to be the leading indicator of long-term success for businesses that depend on organic traffic for their customer acquisition.

It boils down to a simple question for CEOs: how badly do you want to win?

The answer will be apparent in your SERP data.