Prompt Research

Discover the Prompts That Matter

Suggestions Explorer mines prompts from People Also Ask (PAA) questions, LLM responses, synthetic queries, and query fanouts, without relying on unreliable clickstream data. Understand what your audience is asking AI.

LLM prompts to track, categorized by intent.

25 prompts for competitive monitoring - categorized as Product, How-to, Comparison, Service, and Feature. Select and track with one click.

Mine Prompts From Multiple Sources

Drawing on several independent sources surfaces prompts that any single source would miss across the AI search landscape.

Where We Mine Prompts

Four distinct sources combine to give you comprehensive prompt intelligence.

People Also Ask

Questions Google surfaces as related queries - proven user intent signals directly from search behavior.

LLM Responses

Questions and topics extracted from actual AI-generated answers across ChatGPT, Perplexity, and more.

Synthetic Generation

AI-powered query expansion that generates semantically related prompts based on your seed topics.

Query Fanouts

Sub-queries that LLMs generate internally when processing user prompts - the hidden search layer.

Why We Don't Use Clickstream Data

Many prompt research tools rely on clickstream data to estimate "prompt volume." We deliberately avoid this approach because we believe clickstream sources are not currently reliable for AI search research.

- Sampling bias: Clickstream panels dramatically under-represent certain demographics and geographies, skewing volume estimates.

- AI chat opacity: Most clickstream tools can't see inside ChatGPT, Claude, or Perplexity conversations - they only see domain visits, not prompts.

- Extrapolation errors: Projecting from small panels to "total volume" introduces massive uncertainty that's rarely disclosed.

- Privacy erosion: Clickstream data collection raises significant privacy concerns that conflict with our values.
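To make the extrapolation point concrete, here is a minimal sketch of how wide the uncertainty gets when a small panel is projected to a whole population. The panel size, hit count, and population figure are hypothetical, and the Wilson score interval is a standard statistical technique chosen for illustration, not something any particular clickstream vendor is known to use. It also assumes a perfectly random panel, so it captures sampling error only; the sampling bias described above would widen the real uncertainty further.

```python
import math

def wilson_interval(hits: int, panel_size: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    p = hits / panel_size
    denom = 1 + z**2 / panel_size
    centre = (p + z**2 / (2 * panel_size)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / panel_size + z**2 / (4 * panel_size**2)) / denom
    )
    return centre - half_width, centre + half_width

# Hypothetical figures: 3 of 10,000 panelists were seen issuing a prompt,
# and the vendor projects that share onto 50 million users.
hits, panel_size, population = 3, 10_000, 50_000_000

point = hits / panel_size * population
low, high = wilson_interval(hits, panel_size)

print(f"point estimate: {point:,.0f}")
print(f"plausible range: {low * population:,.0f} to {high * population:,.0f}")
```

Under these assumptions the upper end of the range is several times the lower end, which is the kind of spread a single "prompt volume" number hides.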

Relevance Scoring

Every suggested prompt is scored for relevance to your seed topic, helping you prioritize high-value content opportunities.
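As a rough illustration of what scoring against a seed topic means, the sketch below ranks prompts by word overlap (Jaccard similarity) with the seed. This is a deliberately simple stand-in and not a description of how Suggestions Explorer computes relevance; the function names and sample prompts are hypothetical.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def relevance(seed: str, prompt: str) -> float:
    """Jaccard similarity between seed and prompt word sets, from 0.0 to 1.0."""
    a, b = tokens(seed), tokens(prompt)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)

seed = "email marketing software"
prompts = [
    "how to bake bread",
    "best email marketing software",
    "email software for small teams",
]

# Highest-scoring prompts first.
ranked = sorted(prompts, key=lambda p: relevance(seed, p), reverse=True)
for p in ranked:
    print(f"{relevance(seed, p):.2f}  {p}")
```

Word overlap misses synonyms and paraphrases, so a production scorer would more plausibly rely on semantic similarity; the ranking idea stays the same.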

Topic Clustering

Automatically group related prompts into themes. Identify content pillars and subtopic opportunities at scale.

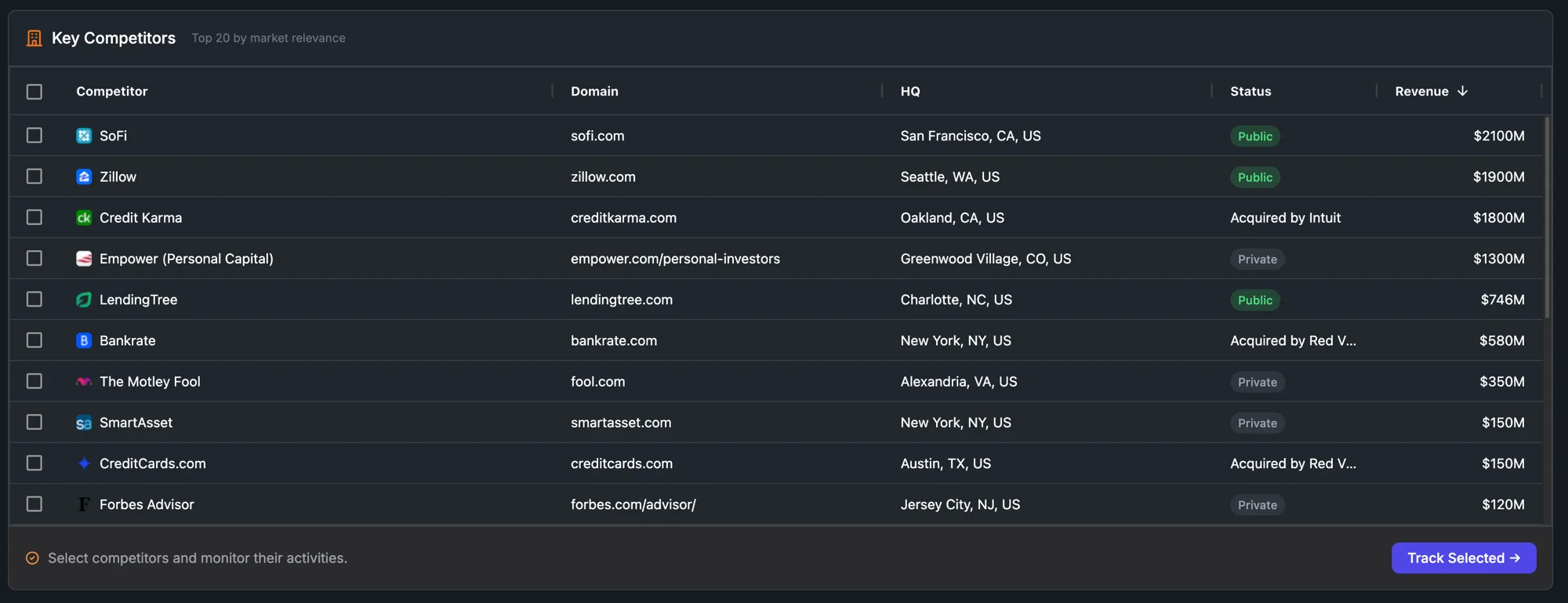

Competitor Analysis

See which prompts competitors are visible for. Find gaps where you can create content they haven't addressed.

Trend Detection

Track emerging prompts over time. Catch rising topics before they peak and create content early.
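One simple way to think about "rising" prompts is to compare how often each prompt was observed in two consecutive periods. The sketch below is an assumption-laden illustration, not the product's algorithm: the counts stand for hypothetical sightings of a prompt across mined sources, and the `min_growth` and `min_count` thresholds are arbitrary.

```python
def rising_prompts(
    previous: dict[str, int],
    current: dict[str, int],
    min_growth: float = 2.0,
    min_count: int = 5,
) -> list[tuple[str, float]]:
    """Return (prompt, growth ratio) pairs that grew by at least min_growth."""
    rising = []
    for prompt, now in current.items():
        if now < min_count:
            continue  # too few sightings to call it a trend
        before = previous.get(prompt, 0)
        growth = now / max(before, 1)  # treat unseen prompts as a baseline of 1
        if growth >= min_growth:
            rising.append((prompt, growth))
    # Fastest growers first.
    return sorted(rising, key=lambda item: item[1], reverse=True)

# Hypothetical sighting counts for two consecutive periods.
last_month = {"crm for startups": 4, "best crm": 10}
this_month = {"crm for startups": 12, "best crm": 11, "ai crm agents": 6, "crm memes": 2}

for prompt, growth in rising_prompts(last_month, this_month):
    print(f"{growth:.1f}x  {prompt}")
```

The minimum-count filter keeps one-off sightings from registering as trends, at the cost of flagging new prompts slightly later.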

Bulk Export

Export thousands of prompts to CSV or directly to BigQuery. Feed the results into your content calendar, writer briefs, or AI tools.

API Access

Programmatic access to Suggestions Explorer. Integrate prompt research into your content workflows.

See it with your own data.

30-minute demo. We'll run it on your domain - no prep required.